Picture this: you go on Roblox, an online game platform catered to children and teens, where you can play and create your own games. Then, all of a sudden, you see a re-creation of the 2019 mosque shooting in Christchurch, New Zealand, built on the platform and posted to TikTok.

One user shared a 64-second time-lapse video re-creating the shooting carried out by Brenton Tarrant, who live-streamed the attack on Facebook. Years later, versions of this footage are still being replicated, with no effort made to remove them.

THE GROWING PIPELINE OF HATE

These aren’t isolated incidents. Posts like these have opened a pipeline of hateful content that has been piling up on social media, to the point where your feed can fill with extremist views, racist symbols, and misinformation and disinformation directed at marginalized communities.

What starts as one post can quickly spread, especially on platforms built for teens and young adults, where rapid sharing can alienate users and harm their mental health.

What was once a thriving arena for global communication is now a double-edged sword that has the capacity to reach people for good or evil.

Chris Tenove, a researcher at the University of British Columbia in Vancouver and the assistant director of the Centre for the Study of Democratic Institutions, says the emergence of social media has reshaped how people communicate.

“The biggest change has to do with lowering the barriers for people across kind of time and space to communicate with each other. Across… huge distances,” said Tenove.

But that accessibility has also opened doors for hate, racism, misinformation and disinformation.

Tenove says social media has changed how people express their anger. He gave the example that, in the past, someone who disliked what a politician said or did might vent their frustration by yelling at the television.

Today, that same energy can be directed at the politician themselves. Anyone can look up a politician online and send hateful messages in the exact tone that was once reserved for the living room.

ONE SIDE OF THE SCREEN

For some creators, building an online presence felt simple and harmless at first. They had no idea that the dark side of likes and comments would eventually reach them.

Dr. Nahla Al Sarraj, a Syrian-Canadian fifth-year psychiatry resident, began her content creation journey on TikTok in late 2020 and early 2021. Her first push to post on social media came after she ended a difficult relationship.

She leaned into social media as a way to share what she knows, focusing on mental health topics such as relationships, abuse, trauma and healing. Since 2020, she has amassed over 300,000 followers on TikTok and more than 160,000 followers on Instagram.

“I thought, oh my gosh, if I can teach other people the things that I just learned, that would be incredible. Like, I wish I had known these things before I got into the situation. … but I want to disseminate this knowledge that I now have, and I think that it will help other people, either get through the same situation, and learn so that they don’t stay in a situation like that,” said Al Sarraj.

As her content gained traction, her videos drew people seeking mental health support. But everything changed in October 2023, when Al Sarraj started posting videos about Palestinian rights.

“The hate is entirely directed at me because of my public stance on Palestine, and my posts about Palestinian liberation and against genocide,” said Al Sarraj.

What was once a supportive community for her turned against her. Her inbox is now flooded with violent, explicit and personal messages.

One message told her to harm herself and spewed hate about her faith: “Kill urself, or go to gaza and get raped by hamas. Satan is waiting for you in hell with ur pedophile prophet.”

Other times, she has been accused of inciting terrorism.

A WIDER PROBLEM

Steven Zhou, the spokesperson for the National Council of Canadian Muslims (NCCM), says that over the past couple of years, the organization has seen an increase in online hate complaints.

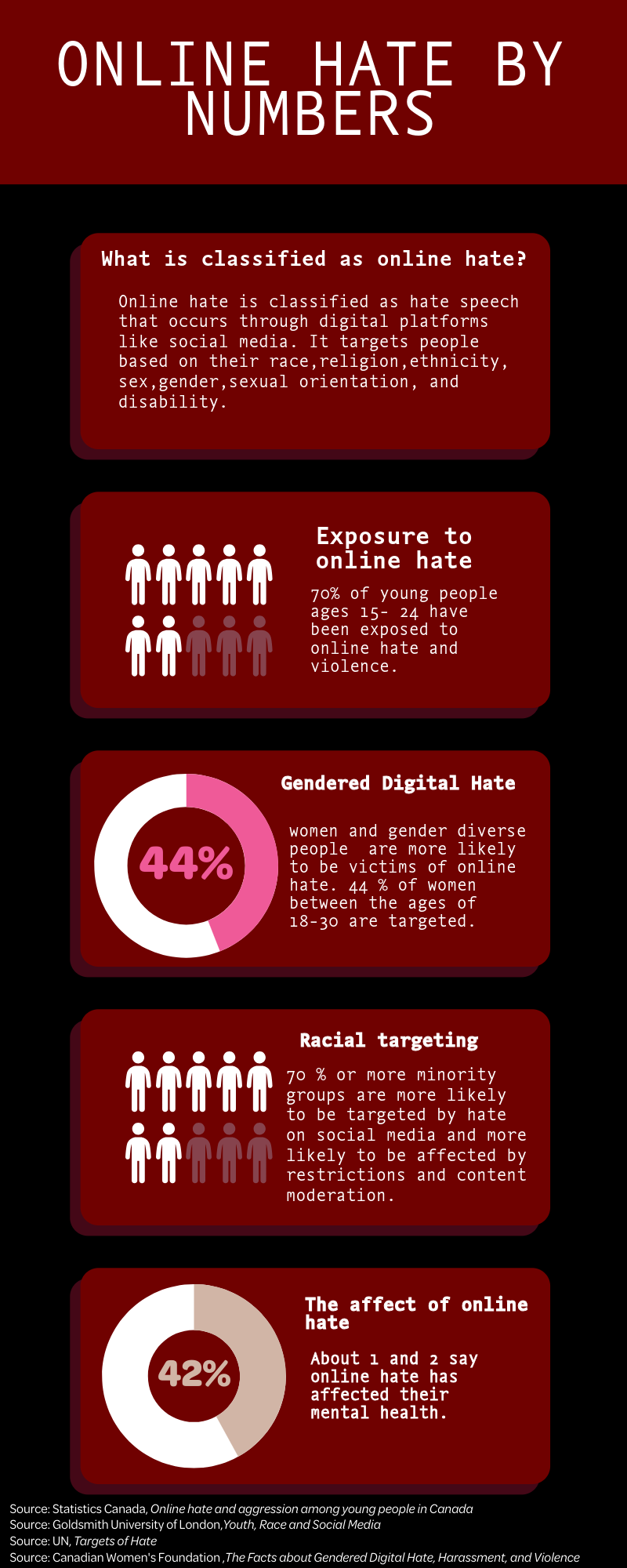

Statistics Canada has reported that seven in 10 young people between the ages of 15 and 24 have encountered online hate. Young women are more likely to be targeted.

Another study, out of Goldsmiths, University of London, found that members of racialized groups see online hate at least once a week. Half reported that it has affected their mental health.

Hate comments are not the only thing Al Sarraj has to navigate. Content moderation has become another obstacle for her.

Al Sarraj says people often mass-report Palestinian-related content on TikTok to see what will get flagged. In her case, she got flagged for “false advertisement.”

She said people will keep reporting her until something is removed. Once a video is taken down, the creator has to file an appeal. If the creator misses the appeal window, the video is permanently removed and the account receives a warning.

THE DIGITAL TRIAGE

A Stanford University study found that people of colour who talk about racism on social media are more likely to be targeted and to have their posts removed.

Posts in which users describe their own experiences of racial discrimination were three times more likely to be flagged by a human as “violating platform guidelines,” while users who spread hate were less likely to be reported. This has a detrimental effect, isolating users and creating an unwelcoming environment both online and offline. And moderation does not seem to flow the other way.

“Like, there have been times where, rather than blocking somebody, I’ll be like, okay, I’ll actually report the comment that they left, that was so heinous and discriminatory and clearly hate speech. And I’ll do it just so I can at least get that report out there, and it will come back, and it will say, it didn’t break community guidelines,” said Al Sarraj.

A UN report says minorities are the targets of 70 per cent or more of hate crimes and hate speech on social media. The report also states that even as minorities are targeted by hate, they are more likely to face restrictions and content moderation on social media themselves.

This creates a widening gap: the communities facing online harm are the ones being suppressed, while hateful comments continue to circulate unchecked.

WHAT SLIPS THROUGH THE CRACKS?

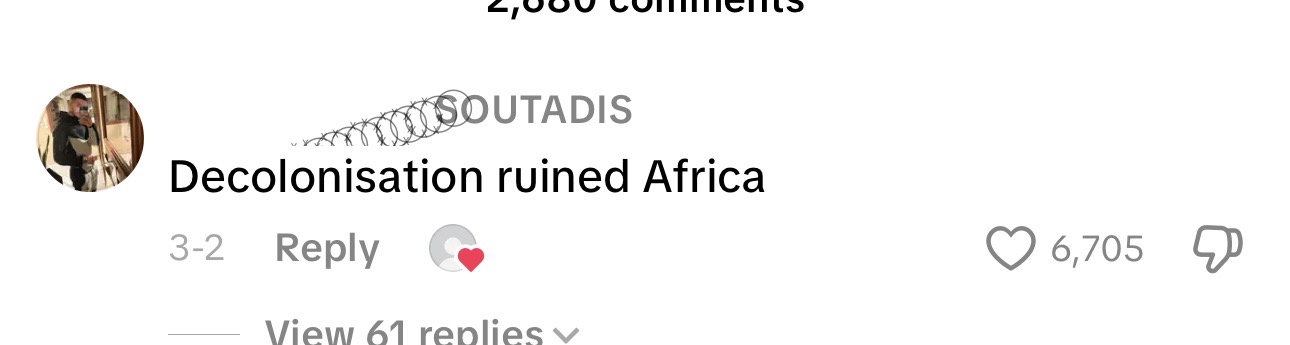

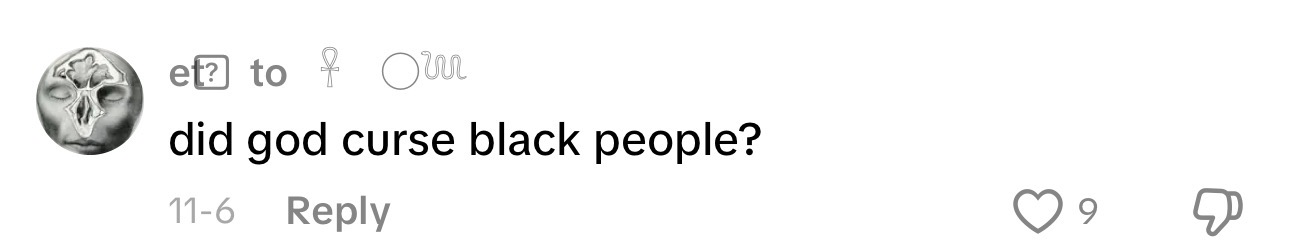

Many comments and videos with racist undertones still make their way online. Antisemitism is prominent on social media. In one example, a user pleads, “painter come back,” a reference to Adolf Hitler, who was an amateur artist.

Another account asserts, “doesn’t matter if it was 271k or 6m it still wasn’t enough.” “271K” is a Holocaust denial talking point, based on a figure attributed to the German Red Cross, which claims the Holocaust resulted in far fewer Jewish deaths than the widely recognized figure of six million. All of these posts have evaded moderation and can still be read on social media.

Islamophobia is also prominent in online spaces, including memes about the Christchurch shooting. Footage of the shooting is still on TikTok and has not been taken down. At the time of the shooting, many users even cheered on the gunman, posting that the dead were “51 Kebabs” or that “Brenton is a hero.”

These posts are not hard to find. Many accounts use trending audio on platforms like TikTok and Instagram, such as Gigi D’Agostino’s “L’Amour Toujours,” which far-right social media users have notoriously co-opted as a hidden racist message.

In May 2024, a video emerged of far-right groups at a bar in Sylt, Germany, singing a xenophobic chant to the tune of “L’Amour Toujours.” The bar patrons, most of whom were associated with the Alternative for Germany (AfD) party, chanted “Deutschland den Deutschen, Ausländer raus!” which translates to “Germany for the Germans, foreigners out!” The video also shows another person giving a salute with his right hand while mimicking Hitler’s mustache.

Far-right groups have also hijacked 2000s dance-pop and phonk, a genre rooted in hip-hop and trap, adding racist lyrics. On Spotify, users have created playlists of “right-wing” songs rebranded to incite hate.

THE PULL BACK FROM RESPONSIBILITY

In early 2025, Meta announced it would end its contracts with third-party fact-checkers and replace them with user-generated “community notes,” similar to the system on Elon Musk’s X, leaving moderation up to users. Critics say Meta did not consider the human rights impact of the switch.

Human rights group Amnesty International decried the measure, stating, “Meta’s algorithms prioritize and amplify some of the most harmful content, including advocacy of hatred, misinformation, and content inciting racial violence – all in the name of maximizing ‘user engagement,’ and by extension, profit.”

A former Meta employee echoed this concern, warning, “I really think this is a precursor for genocide… We’ve seen it happen. Real people’s lives are actually going to be endangered.”

According to a UN report, Facebook contributed to the incitement of violence against Rohingya Muslims in Myanmar in 2017.

“We do know that social media platforms are involved in some cases in hate groups or in more violent political groups communicating with each other, sometimes bringing in new adherents. And then communicating with each other and then mobilizing for violent acts…often in harder to trace spaces like private Telegram groups and other things,” said Tenove.

X is an infamous case of a platform reinstating previously banned users, including President Donald Trump.

Meta CEO Mark Zuckerberg said the reason for abandoning third-party fact-checking was that “fact checkers have been too politically biased and have destroyed more trust than they’ve created.”

Zuckerberg also acknowledged that the shift from third-party fact-checkers to community moderation is a “tradeoff.” He conceded that more harmful content will get through, but said the change will also reduce the number of innocent posts taken down.

“It means we are going to catch less bad stuff, but we will also reduce innocent people’s posts and accounts that we accidentally take down,” said Zuckerberg in a video statement.

Tenove says the biggest driver behind the increase in hateful content is weak or limited content moderation.

“We have seen that the platforms have backed off on content moderation. This is explicit by X, and they have allowed back on voices who, you know, frequently were banned or were under temporary bans because of hate speech…. And so … X has decided to make itself more toxic, more open to hate speech,” he said.

He said emotional or negative messages tend to grab users’ attention, making users more likely to react to and engage with that content.

“Algorithms just tend to reward material that gets engagement,” said Tenove.

HIDE AND SEEK

One way hate bypasses moderation systems is through “dog whistles.”

Dog whistles are coded messages that seem innocent to the general public but carry a specific meaning, often extremist or derogatory, aimed at a particular group. However, coded language is not always harmful.

Julia Mendelson, an assistant professor in the College of Information at the University of Maryland, combines computational methods with insights from communication, linguistics and political science to understand implicit language online.

She says coded language can be a valuable tool that lets communities such as women’s rights advocates and 2SLGBTQIA+ people speak to one another without being harmed, but when misused, it can cause far more harm than good.

“Dog whistle communication can be really dangerous,” she said.

Users also evade moderation by hiding messages in emojis, shortened words or special characters. One example is a pair of lightning bolts, which represent the SS, or Schutzstaffel, a paramilitary organization under Hitler and the Nazi Party in Germany. Numbers like 14 and 88 also carry white supremacist meanings; 88, for instance, stands for “Heil Hitler,” since H is the eighth letter of the alphabet.

Mendelson has compiled a glossary of 340 hidden dog whistles she collected over the past couple of years.

ONLINE HATE BECOMES OFFLINE VIOLENCE

Zhou, from the NCCM, believes online hate can escalate to physical violence. He pointed to Nathaniel Veltman, who was convicted of murder and attempted murder after he drove his pickup truck into a Muslim family of five in London, Ontario, in 2021, killing four of them. The NCCM said his radicalization was a direct result of the content he consumed online.

Al Sarraj describes the summer of 2024 as “the worst time of her life.” She was doxed with details about her personal life. Suddenly, intimate information, such as where she worked, started to show up in the threats she was receiving. Al Sarraj had wanted to protect her privacy; now she felt exposed and unsafe.

“What that did for me is basically remove any sort of shield or barrier from people accessing me,” said Al Sarraj.

The harassment didn’t stop. By November 2025, she had lost her job for being outspoken about Palestinian rights. She was devastated to lose a job she was devoted to, where she loved caring for patients.

She became less outspoken as the tone of some of the comments grew increasingly threatening and frightening.

“I do kind of have that inner pessimist in me that is like, no, they won because they wanted to silence me,” said Al Sarraj.

BLOCKING THE HATE

The Council of Europe’s No Hate Speech Week 2025 highlighted a growing concern in the digital era: hate is no longer confined to in-person encounters. It can now look “professional,” quietly rooted in the systems that shape what people see and share. Martin Mlynar, a board member of the Council of Europe’s No Hate Speech Network, an organization dedicated to combating hate speech, spoke about this digital landscape.

“Hate is evolving – it now often wears a suit and is legitimised by those in positions of power, hides in an algorithm and infiltrates media in plain sight. We need robust litigation, ethical regulation of AI, and responsible journalism. Not just to react, but to prevent, to empower, to protect. Freedom of expression must not become a shield for organized dehumanization.”

The Council of Europe’s 2025 campaign argues that online hate needs more than awareness. It calls for structural protections and legal measures that prioritize accountability.

For Mendelson, tackling online hate means more than removing harmful posts or comments; it’s about reshaping social media as a whole. Trained experts, moderators and community members should also moderate content in other ways: educating and informing people, helping them respond to hate speech, replying to users who spread misinformation, and building an informed and resilient community.

Al Sarraj was once deeply involved in activism, but the weight of relentless online hate has forced her to step back for the sake of her mental health. For now, she is focused on surviving, recharging and finding the strength to eventually return to the movement she once advocated for.

Her experience highlights a concerning reality: users who speak up face restrictions and other consequences, while hate continues to flourish unchecked.